Wipefs raspbian

8/13/2023

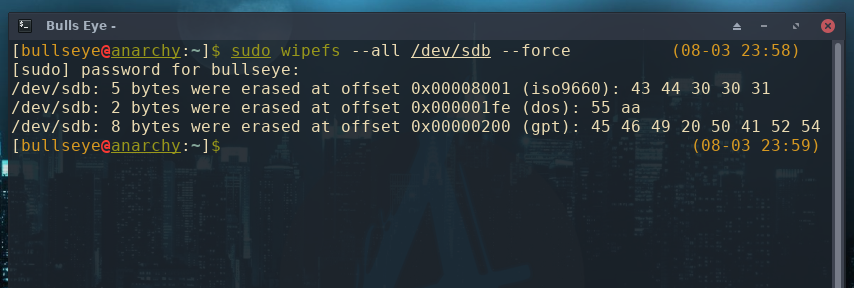

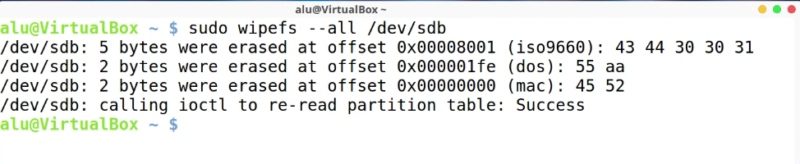

This is a simple guide, part of a series I'll call 'How-To Guide Without Ads'. In it, I'm going to document how I set up bcache on a Raspberry Pi, so I could use an SSD as a cache in front of a RAID array.

bcache is sometimes used on Linux devices to run a faster SSD cache in front of one or more slower hard drives, typically in a storage array.

Getting bcache

In my case, I have three SATA hard drives: /dev/sda, /dev/sdb, and /dev/sdc. I created a RAID5 array with mdadm for the three hard drives, and had the raid device /dev/md0.

I then installed bcache-tools:

`$ sudo apt-get install bcache-tools`

And used make-bcache to create the backing and cache devices (the backing device with -B, the cache device with -C):

`$ sudo make-bcache -B /dev/md0`

Then I tried to look in /sys/block/md0/bcache/ so I could attach the cache to the backing device, but I realized bcache isn't loaded into the default Raspberry Pi OS kernel.

So I cross-compiled the Raspberry Pi Linux kernel, and during the menuconfig portion I enabled bcache (`CONFIG_BCACHE`) under the following menu:

`> Device Drivers`

`> Multiple devices driver support (RAID and LVM)`

I recompiled the kernel and copied my updated kernel to the Pi, then rebooted.

At this point, `lsblk` showed that the bcache0 device was working. But when I checked the status of the cache, it said there was no cache:

`$ cat /sys/block/bcache0/bcache/state`

Attaching the SSD cache to the backing device

Finally, it's time to attach the SSD cache to the backing device:

`$ sudo su` # switch to the root user

As root, echo the cache set's UUID into the `attach` file in /sys/block/bcache0/bcache/. The UUID in that echo command comes from the 'Set UUID' output from the make-bcache -C command earlier.

To actually use the device, I formatted it and mounted it to /mnt:

`$ sudo mkfs.ext4 -E lazy_itable_init=0,lazy_journal_init=0 /dev/bcache0`

To avoid the initialization when making the filesystem, you can omit the -E option entirely. But for RAID arrays I typically let it go full blast on first initialization, because I don't like relying on ext4lazyinit on a RAID array: it can take days at its reduced rate, and affect RAID performance that whole time!

Getting stats

You can check the stats from bcache with:

`$ tail /sys/block/bcache0/bcache/stats_total/*`

tail prints each file in that directory under its own header: bypassed, cache_bypass_hits, cache_bypass_misses, cache_hit_ratio, cache_hits, cache_miss_collisions, cache_misses, and cache_readaheads. The current cache mode is in /sys/block/bcache0/bcache/cache_mode.

If you want to pop the SSD off of the backing device, and use it again for other purposes, you have to first de-register it (otherwise you'll get errors like `probing initialization failed: Device or resource busy`):

`$ sudo su`

`# echo 1 > detach` # Prints a 'cached_dev_detach_finish' message in `dmesg` log

`# echo 1 > stop` # Prints a 'cache_set_free' message in `dmesg` log

Then if you want to use the device for something else, wipe it with wipefs:

`# wipefs -a /dev/nvme0n1`

See the kernel documentation for bcache for more detail and usage examples.
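If you end up checking the `state` file and the `stats_total` directory a lot, the checks above can be wrapped in a few shell functions. This is only a minimal sketch: the function names (`bcache_state`, `bcache_has_cache`, `bcache_stats`) are made up for illustration and are not part of bcache-tools, and the default path assumes the device shows up as `bcache0`, as it did in this guide.

```shell
#!/usr/bin/env bash
# Sketch: inspect a bcache device through its sysfs directory.
# The function names are hypothetical helpers, not bcache-tools commands.
# The default directory assumes the device is bcache0, as in this guide.

# Print the state of the backing device ("no cache" until a cache is attached).
bcache_state() {
  local dir="${1:-/sys/block/bcache0/bcache}"
  cat "$dir/state"
}

# Succeed only if the state file is readable and reports something
# other than "no cache".
bcache_has_cache() {
  local state
  state="$(bcache_state "${1:-}")" || return 1
  [ "$state" != "no cache" ]
}

# Print "name: value" for every file in stats_total, one per line.
bcache_stats() {
  local dir="${1:-/sys/block/bcache0/bcache}"
  local f
  for f in "$dir"/stats_total/*; do
    [ -f "$f" ] || continue
    printf '%s: %s\n' "$(basename "$f")" "$(cat "$f")"
  done
}
```

After sourcing the file, `bcache_has_cache && bcache_stats` prints the counters only once a cache is attached. Each function also accepts a different sysfs directory as its first argument, which is handy if your device is not `bcache0`.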
